Making Trustworthiness Operational: The Next Phase of Digital Transformation

Prof. Norbert Pohlmann from the eco Association and Ulla Coester from the Institute for Internet Security outline how trustworthiness in AI must move beyond compliance to become operational practice – and a decisive competitive advantage.

© OpenAI (generated with Whisk)

When we first articulated trustworthiness as a cornerstone of digitalization more than three years ago, the core insight resonated widely: digital transformation requires both trust and trustworthiness. This principle is as valid today as it was then. Why?

The dependence between manufacturers and user companies, which arises from the growing complexity that generally accompanies the use of new technologies, still exists. So do its consequences for both sides: for user companies, it affects their decision-making competence; for manufacturers, it affects their room for action. Neither side can act sovereignly in the sense that its actions remain without consequences. In concrete terms, this means, for example, that users often have to disclose more data than they would like when using services and have no influence over undesirable uses of that data. On the other hand, users now have more options at their disposal to scrutinize the activities of manufacturers and make them public.

It follows that efforts to improve the quality of this relationship are essential, especially for the acceptance of innovative technologies in general and AI solutions in particular. Why? Innovative technologies, and with them the entire Internet/IT infrastructure, have become not only increasingly complex but also nearly opaque. This creates a serious dilemma: while use is increasing, knowledge of the underlying background and interrelationships is decreasing. This can lead to one of two reactions: either a disproportionate rejection of the technology and the corresponding services, or blind trust. Both are counterproductive for value-creating digitalization. Blind trust does not prevent use in general, but it does prevent meaningful use of new applications or innovative services, because the decision to use them was not based on a high level of decision-making competence and thus sovereignty.

Building trust: The general concept

For this reason, the concepts of trust and trustworthiness must be analyzed in more detail with regard to value-adding digitalization. Trust has a fundamentally positive connotation: according to the sociologist Niklas Luhmann, trust is a mechanism for reducing complexity [1], i.e., something that makes life easier. Manufacturers should therefore direct their activities toward building a good relationship of trust with their users. According to Luhmann [2], trust enables an optimistic view of the future, even though people generally have neither sufficient information nor the necessary control to justify this optimism. Trust can therefore be described as an adaptive strategy that helps people remain capable of acting in a world characterized by uncertainty. For this to work, however, the user must receive reliable signals of the manufacturer’s trustworthiness with regard to its competence, goodwill, and integrity.

As already mentioned, trust, especially in the context of digitalization, is only positive if it is not blind and naive. The difficulty is that the user must in principle rely on outside expertise, because the company’s capabilities, knowledge, and intentions are not known to them per se. First and foremost, therefore, the user’s suspicion that the company places its own interests above the user’s, or that it could behave opportunistically, must be dispelled. Applied to digitalization, this means: the manufacturer must prove that the user can trust it; in other words, that it is trustworthy.

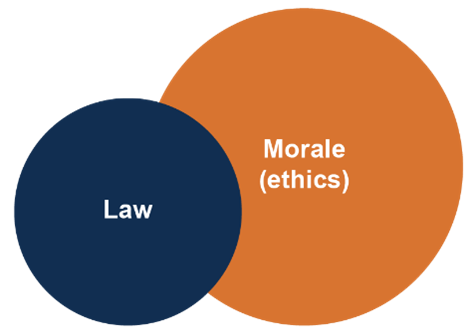

Building trust: Law vs. morale (ethics)

In principle, it could be argued that enough is being done to create trust with the rules and regulations (laws) that already exist. But the boundary conditions – for example, given by the AI Act – are relatively vague and do not provide precise guidelines on how they should be implemented. In addition, on closer inspection, regulations alone are not enough – because AI solutions can still be developed and deployed that are legal but not legitimate in terms of the user but only serve one-sided corporate interests.

Fig. 1. Law and Morale (Ethics)

To build trust, it is fundamentally necessary to prevent mistrust from arising in the first place. This gives rise to the demand for more transparency from companies, especially in the context of AI, in other words, providing user companies with all the information they need to build trust and thus sovereignty. In this context, it is particularly important for AI companies to state what they do or do not do in the interests of their customers, above and beyond the legal requirements (Fig. 1). Self-limitation, “the ability to limit one’s own freedom, whether in the form of expectations of others or in the form of one’s own actions, in such a way that the use of this freedom does not result in harm” [3], is a particular expression of a company’s respect for its customers. In terms of digitalization, this requires a consistent sense of responsibility throughout a company, from the management strategy down to the developer, who must be willing to make an AI solution fair, transparent, and explainable.

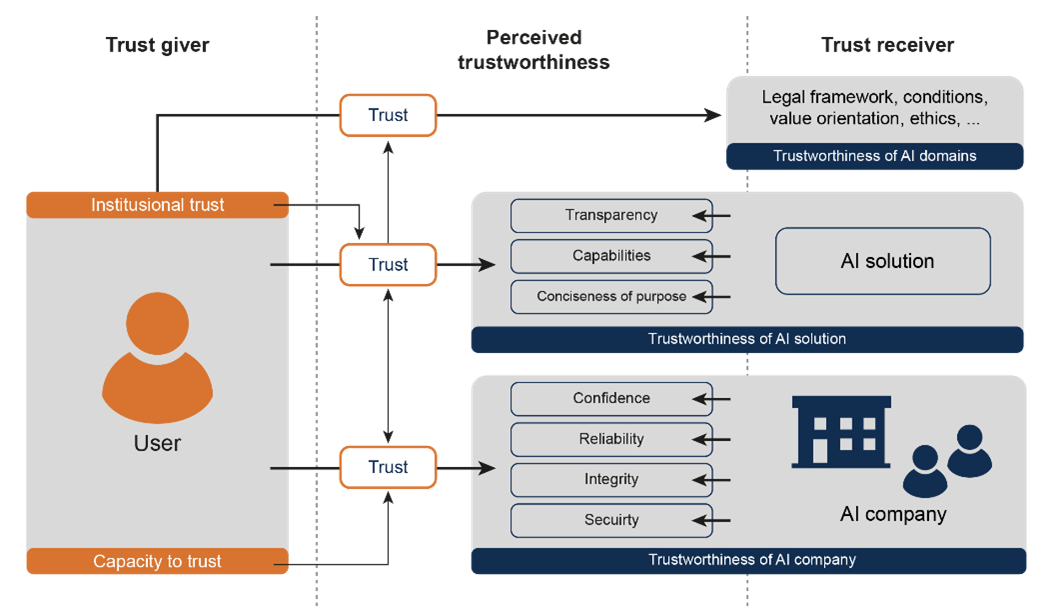

The structure of the model of trustworthiness

The relationships for building trust are shown in the model of trustworthiness (Fig. 2) and are explained below based on the individual factors [4] .

Fig. 2. Model of Trustworthiness

Trustworthiness of AI

Today, the trustworthiness of AI solutions means that the systems are secure by design, robust and resilient in operation, transparent, and accountable. It is not enough for a system to function efficiently; it must also be explainable and meet appropriate standards of reliability.

When deciding to use new IT technologies, however, the characteristics of the AI solution itself are not the only decisive factors; the AI company’s reputation also plays an important role. Since trust in IT technologies, applications, and services is currently, in principle, not (yet) fully justified, companies must fulfill additional conditions to increase their level of trustworthiness. To this end, AI companies must make their strategy visible to the outside world. In practice, this means aligning their actions with the defined criteria of confidence, reliability, integrity, and security. The decisive requirement for these criteria is that they allow company-specific trustworthiness to be assessed rationally, so that users can evaluate the corresponding parameters quickly and easily.

Confidence, for example, is a relevant criterion for trustworthiness. With regard to functionality, it can generally be generated when companies have both the capability and the means to provide AI technology, services, and applications that are not only reliable but also secure.

Integrity, in turn, is demonstrated when AI companies take into account all factors relevant to trustworthiness, paying particular attention to the ethical dimensions. This means that an AI company, as the recipient of trust, is in principle in a position to keep all the promises it has made, actually keeps them, and is generally prepared to respect the norms and values of society.

Conclusion: Trustworthiness becomes a competitive advantage

Trustworthiness is not a constraint on innovation; it is an enabler. It provides the stable foundation upon which innovation can occur without eroding confidence or legitimacy. In a digital economy characterized by complexity and uncertainty, trustworthiness becomes the decisive factor that allows digital systems to be widely adopted and socially accepted.

For precisely this reason, German and European companies could distinguish themselves through their trustworthiness, not least because responsible, ethical, and thus trustworthy action is not incompatible with economic interests. On the contrary, value-oriented behavior is in the long-term economic interest of companies.

This aspect is given high priority in the design of the trustworthiness platform: there, AI companies state comprehensively which values underpin both their business activities and the design of their AI solutions. In turn, user companies can decide in a digitally sovereign way which AI companies and AI solutions are trustworthy.

References

[1] Luhmann, N. (1968): Vertrauen: Ein Mechanismus der Reduktion sozialer Komplexität [Trust: A Mechanism for the Reduction of Social Complexity].

[2] Luhmann, N. (2000): Vertrauen: Ein Mechanismus der Reduktion sozialer Komplexität [Trust: A Mechanism for the Reduction of Social Complexity].

[3] Suchanek, A. (2021): Ethik und Digitalisierung [Ethics and Digitalization]. In Hackspiel-Mikosch, E./Neuhaus, R. (eds.): Ethische Herausforderungen der Digitalisierung und Lösungsansätze aus den angewandten Wissenschaften [Ethical Challenges of Digitalization and Applied Solutions], Vol. 1, pp. 21–36.

[4] Coester, U., Pohlmann, N. (2022): Vertrauenswürdigkeit schafft Vertrauen – Vertrauen ist der Schlüssel zum Erfolg von IT- und IT-Sicherheitsunternehmen [Trustworthiness Creates Trust – Trust Is the Key to the Success of IT and IT Security Companies]. DuD Datenschutz und Datensicherheit – Recht und Sicherheit in Informationsverarbeitung und Kommunikation, 2/2022.

📚 Citation:

Pohlmann, Norbert, & Coester, Ulla. (February 2026). Making Trustworthiness Operational: The Next Phase of Digital Transformation. dotmagazine. https://www.dotmagazine.online/issues/digital-trust-policy/operational-trustworthiness-ai

Norbert Pohlmann is a Professor of Computer Science in the field of cybersecurity and is Head of the Institute for Internet Security - if(is) at the Westphalian University of Applied Sciences in Gelsenkirchen, Germany, as well as Chairman of the Board of the German IT Security Association TeleTrusT, and a member of the board at eco – Association of the Internet Industry.

Ulla Coester is Project Director of “TrustKI” at the Institute for Internet Security – if(is) at Westphalian University of Applied Sciences in Gelsenkirchen. She is also a Lecturer in Digital Ethics at Fresenius University of Applied Sciences in Cologne, focusing on Corporate Digital Responsibility and the operationalization of trustworthy AI.

FAQ

What does it mean to make trustworthiness operational in AI?

Making trustworthiness operational means embedding trust-focused practices into daily development, governance, and product processes rather than treating trust as an abstract principle. The article by Prof. Norbert Pohlmann and Ulla Coester (dotmagazine, published by eco – Association of the Internet Industry) stresses that trust must be observable and verifiable in practice.

Why is legal compliance not enough to build trust?

The authors argue that legal compliance alone does not guarantee legitimacy or user confidence because regulations like the AI Act can lack detailed implementation guidance. Companies should demonstrate ethical behavior, transparency, and a visible commitment to user interests beyond what the law minimally requires.

What core attributes define trustworthy AI?

Trustworthy AI should be secure by design, resilient in operation, transparent, explainable, and accountable. The article outlines that users need rational signals of reliability and competence, not just functional performance.

How does company reputation affect trustworthiness?

The article explains that trust is influenced by both technical controls and reputation. AI companies should make their principles and practices visible to allow users to assess trustworthiness quickly and confidently.

What is “self-limitation” and why does it matter?

Self-limitation refers to voluntarily constraining a company’s actions to prevent harm and respect user interests beyond legal minimums. The authors describe it as a practical signal of responsibility and ethical respect in AI use and design.

Why is trustworthiness considered a competitive advantage?

The article concludes that trustworthiness supports wider adoption and acceptance of digital systems, enabling innovation without eroding confidence. Responsible practices can thus differentiate organizations in competitive markets.